Making data management responsible

7 July 2022

Automated decision systems

Computer scientist Sebastian Schelter takes his inspiration from a grand challenge that links the fundamental rights of citizens to data management systems: 'How can we build automated decision systems in such a way that people's fundamental rights are already respected by the design of the system?'

Schelter is assistant professor at the Informatics Institute of the University of Amsterdam, working at the intersection of data management and machine learning in the INtelligent Data Engineering Lab (INDElab).

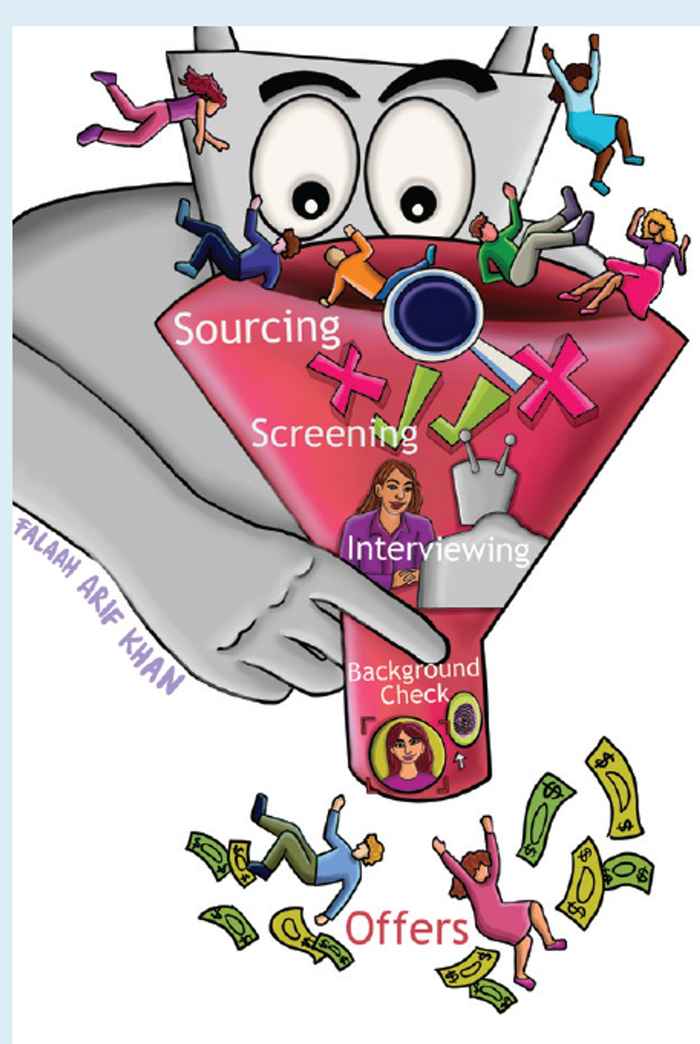

In recent years, more and more automated decision systems have been introduced into society: from systems that automatically select job candidates or automatically recommend products, to systems that automatically predict where certain crimes are going to happen or determine who can get which loan. Unfortunately, the application of such systems has led to various forms of discrimination, for example by gender, age, ethnicity or socio-economic status. A famous example is a hiring algorithm used by Amazon that showed bias against women.

Data life cycle

'Traditionally, the machine learning community has tried to fix such biases by focusing on the data that they directly train their system on', says Schelter. 'But this is only the last mile of the data life cycle. And it's not enough to build compliant systems. We need to look at all the stages before: How are the data collected? How are the data prepared? How long are the data valid and appropriate? That’s why we need to understand and manage the data life cycle.'

Together with four international colleagues, Schelter published the article 'Responsible Data Management' in the June issue of the Communications of the ACM, the flagship magazine of the Association for Computing Machinery (ACM), the international association of computer scientists. 'In recent years there has been a lot of attention for responsible AI', says Schelter. 'While important, this is not enough. We also need responsible data management, and our article tries to make the computer science community aware of this.'

Automatic warning

Schelter himself focuses on one type of bias in his research: the technical bias. 'Technical bias is introduced by technical operations on the data, applied by data processing systems', he explains. 'Imagine you have a demographic database that contains information about people and the zip-codes of where they live. When you apply a zip-code filter on the data, then it looks like an innocuous technical operation only. But if you think a bit deeper, then you realize that somebody's zip-code might be strongly correlated with their socio-economic status, age or ethnicity. Such a filter operation might reduce the representation of people in the data.'

Schelter is developing systems that can automatically give a warning if a certain technical data preparation operation, like filtering data or joining data together, might introduce technical bias. And that's new. 'The database community has been using the relational model and algebraic query languages as a foundation for data processing', Schelter explains. 'For data preparation workloads aimed at machine learning there were no such algebraic representations, but we have built one. And we have shown that it can be used to make technical operations on the data more transparent.'

In the zip-code example, such a system can tell whether the filter has significantly changed the proportion of a certain demographic group. It is then up to a human expert to decide whether the filtering is acceptable.
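To make the idea concrete, here is a minimal Python sketch of such a check. It is not the system built by Schelter's group: the function names, the toy records, the zip-codes and the 10 percentage point threshold are all assumptions chosen for illustration. The sketch compares each group's share of the data before and after a filter and reports the groups whose share shifted by more than the threshold.

```python
from collections import Counter


def group_shares(rows, key):
    """Return each group's share of the rows, e.g. {'under_40': 0.5, ...}."""
    counts = Counter(row[key] for row in rows)
    total = sum(counts.values())
    return {group: count / total for group, count in counts.items()}


def filter_with_warning(rows, predicate, group_key, threshold=0.1):
    """Apply a filter and flag groups whose share changed by more than threshold.

    The threshold (0.1 = 10 percentage points) is an illustrative assumption,
    not a recommended value.
    """
    before = group_shares(rows, group_key)
    kept = [row for row in rows if predicate(row)]
    after = group_shares(kept, group_key) if kept else {}
    warnings = {}
    for group, share in before.items():
        change = after.get(group, 0.0) - share
        if abs(change) > threshold:
            warnings[group] = round(change, 3)
    return kept, warnings


# Hypothetical toy data: six people, two zip-codes, two age groups.
people = [
    {"zip": "1011", "age_group": "under_40"},
    {"zip": "1011", "age_group": "under_40"},
    {"zip": "1011", "age_group": "over_40"},
    {"zip": "1102", "age_group": "over_40"},
    {"zip": "1102", "age_group": "over_40"},
    {"zip": "1102", "age_group": "under_40"},
]

# A seemingly innocuous zip-code filter.
kept, warnings = filter_with_warning(
    people, lambda row: row["zip"] == "1011", "age_group"
)
print(warnings)
```

In this toy data, both age groups start at half of the records, and the zip-code filter shifts each group's share by about 17 percentage points, so both are flagged. The sketch only reports the shift; as in the article, judging whether the shift is acceptable is left to a human expert.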

'We collaborate with a number of e-commerce companies, for example Bol.com', says Schelter, 'and they all have responsible data management issues because they have to comply with regulations like the GDPR. The systems that we develop can be applied in many of their use cases.'

Research Information

CACM-article 'Responsible Data Management'

Sebastian Schelter

The research described in this article falls within the scope of the research theme 'Data Science' of the Informatics Institute and contributes to the following Sustainable Development Goals: SDG 10 Reduced inequality, SDG 5 Gender equality.